Introduction to Private LLMs

What is a Private LLM?

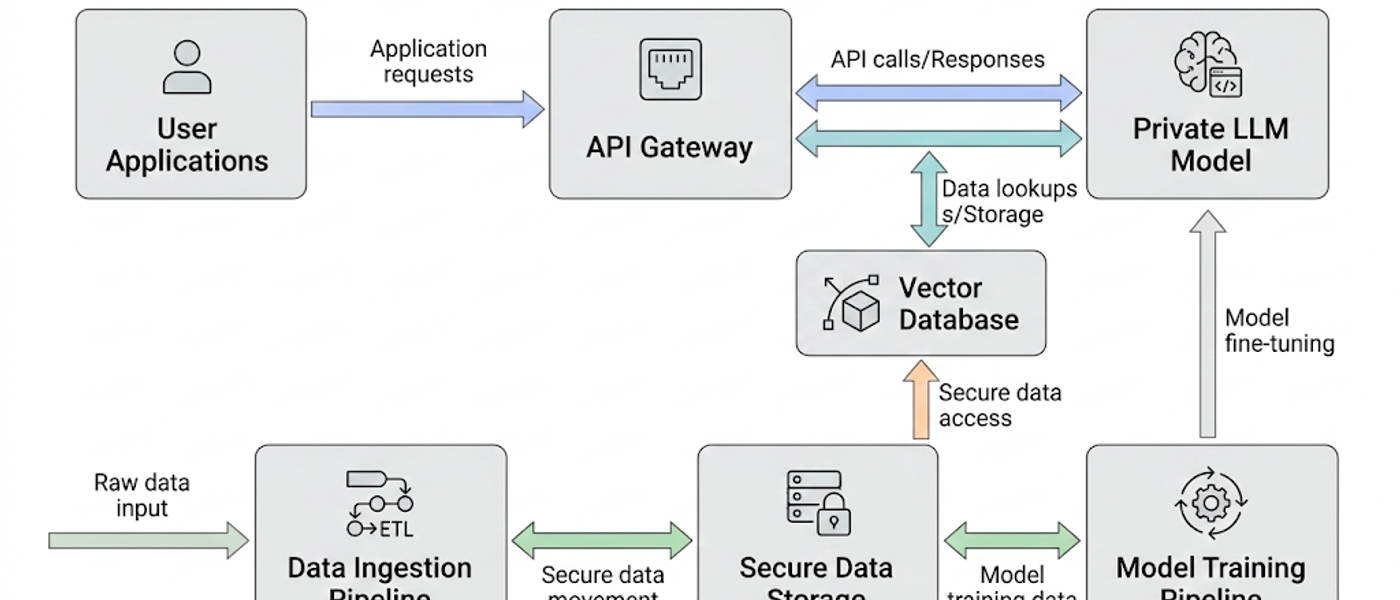

A private LLM (private large language model) is a language model that an organization hosts on infrastructure it controls and typically trains or fine-tunes on proprietary data, so that sensitive information stays confidential and under the organization's control. Unlike public LLMs, which are offered as shared services and trained largely on publicly available data, private LLMs can be tailored to specific business needs and customized for particular applications.

Importance of Private LLMs in Today's Business Landscape

Organizations increasingly rely on AI to enhance their operations, improve customer engagement, and drive innovation. Private LLMs offer customized solutions that align with organizational goals while supporting compliance with data privacy regulations. They let businesses apply AI to their own data without giving up control over it.

Infrastructure Options for Private LLM Deployment

On-Premises vs Cloud Solutions

When considering private LLM deployment, organizations face the choice between on-premises solutions and cloud-based infrastructure. On-premises deployments offer greater control and security, as data remains within the organization's physical environment. However, they often require significant upfront investment in hardware and ongoing maintenance. In contrast, cloud solutions provide scalability and flexibility, allowing organizations to pay for only the resources they use, but may raise concerns regarding data security and compliance.

Hybrid Deployment Models

Hybrid deployment models let organizations use cloud resources for scalability while keeping sensitive data on-premises. This approach can balance performance, cost, and security. Organizations must evaluate their specific needs, regulatory requirements, and budget constraints to determine the most suitable infrastructure option for their private LLM deployment.
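One way to picture the hybrid split is a small router that sends sensitive requests to an on-premises endpoint and everything else to the cloud. The following is a minimal sketch, not a production design: the endpoint URLs, the `Request` type, and the two regular expressions are all hypothetical, and a real deployment would likely rely on a proper data-classification step rather than pattern matching.

```python
import re
from dataclasses import dataclass

# Hypothetical endpoints; replace with your own deployment addresses.
ON_PREM_ENDPOINT = "https://llm.internal.example/v1/generate"
CLOUD_ENDPOINT = "https://llm.cloud.example/v1/generate"

# Rough illustrative patterns only (assumption): a real system would use
# a dedicated data-classification service instead of two regexes.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),   # US SSN-like number
    re.compile(r"\b(?:\d[ -]?){13,16}\b"),  # card-like run of digits
]


@dataclass
class Request:
    prompt: str
    tagged_sensitive: bool = False  # set by the caller when the data class is known


def choose_endpoint(request: Request) -> str:
    """Return the endpoint that should serve this request."""
    if request.tagged_sensitive:
        return ON_PREM_ENDPOINT
    if any(p.search(request.prompt) for p in SENSITIVE_PATTERNS):
        return ON_PREM_ENDPOINT
    return CLOUD_ENDPOINT
```

The router fails toward the on-premises endpoint whenever either the caller's tag or a pattern match suggests sensitive content, which keeps the more conservative path as the fallback for anything flagged.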

Cost Models for Private LLM Deployment

Understanding Total Cost of Ownership

Understanding the total cost of ownership (TCO) for private LLM deployment is crucial for organizations. TCO includes not only the initial capital expenditure for hardware and software but also ongoing operational costs such as maintenance, updates, and personnel. Additionally, organizations must consider costs associated with data storage, processing power, and potential cloud service fees if applicable.
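The cost components listed above can be combined into a simple multi-year estimate. The sketch below assumes a flat model in which recurring costs stay constant each year and ignores discounting, depreciation, and price changes. The `CostInputs` fields and the function name are illustrative, not a standard.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    hardware_capex: float           # one-time hardware purchase
    software_capex: float           # one-time licences and setup
    annual_maintenance: float       # maintenance and updates per year
    annual_personnel: float         # staff cost per year
    annual_storage: float           # data storage per year
    annual_compute: float           # processing power per year
    annual_cloud_fees: float = 0.0  # cloud service fees per year, if applicable


def total_cost_of_ownership(costs: CostInputs, years: int) -> float:
    """Sum one-time capital costs and flat recurring costs over `years`."""
    if years < 0:
        raise ValueError("years must be non-negative")
    capex = costs.hardware_capex + costs.software_capex
    annual_opex = (
        costs.annual_maintenance
        + costs.annual_personnel
        + costs.annual_storage
        + costs.annual_compute
        + costs.annual_cloud_fees
    )
    return capex + annual_opex * years
```

With made-up figures, `total_cost_of_ownership(CostInputs(400_000, 50_000, 40_000, 300_000, 20_000, 60_000, 30_000), years=3)` gives 1,800,000: 450,000 in capital costs plus three years of 450,000 in recurring costs.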

Budgeting for Infrastructure and Maintenance

Budgeting for infrastructure and maintenance requires a comprehensive approach. Organizations should allocate funds for initial setup, regular maintenance, and potential upgrades to ensure optimal performance. It's also essential to factor in training costs for staff who will manage and utilize the LLM, as well as any compliance-related costs that may arise from industry regulations.

Compliance Requirements for Industry-Specific Deployments

HIPAA Compliance for Healthcare Applications

For organizations in the healthcare sector, compliance with HIPAA (Health Insurance Portability and Accountability Act) is paramount when deploying private LLMs. This involves implementing stringent data security measures to safeguard protected health information (PHI) and conducting regular audits to maintain compliance. Organizations must also train employees on HIPAA regulations and the importance of safeguarding sensitive health information.

FinTech Regulations and Data Security

In the FinTech industry, compliance with PCI DSS (Payment Card Industry Data Security Standard) and, where personal data of individuals in the EU is processed, GDPR (General Data Protection Regulation) is critical. PCI DSS governs how payment card data is stored, processed, and transmitted, while GDPR governs the handling of personal data more broadly. Organizations must implement robust data security measures, conduct risk assessments, and establish clear policies and procedures to ensure compliance during the deployment of private LLMs.

Deployment Patterns and Best Practices

Common Deployment Patterns

Common deployment patterns for private LLMs include phased, parallel, and full deployment. Phased deployment allows organizations to gradually introduce the model, minimizing risk and enabling adjustments based on initial feedback. Parallel deployment involves running the private LLM alongside existing systems to compare performance and ensure a smooth transition. Full deployment, while riskier, can be effective when organizations have confidence in the model's capabilities.
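A phased deployment is often implemented as a percentage rollout: each user is assigned to a stable bucket, and the share of buckets served by the new model is raised step by step. The sketch below shows one common way to do this with a hash of the user ID. The function names and the 100-bucket scheme are assumptions for illustration.

```python
import hashlib


def rollout_bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def use_private_llm(user_id: str, rollout_percent: int) -> bool:
    """Return True if this user falls inside the current rollout percentage."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    return rollout_bucket(user_id) < rollout_percent
```

Because the bucket depends only on the user ID, a user who is included at 10% stays included when the rollout is raised to 50%. Users outside the rollout keep using the existing system, which also gives a natural comparison group, as in a parallel deployment.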

Best Practices for Successful Implementation

Best practices for successful implementation of private LLMs include thorough testing before deployment, continuous monitoring of performance, and gathering feedback from users. Organizations should also invest in training for end-users to maximize the model's effectiveness. Establishing a clear governance framework and defining roles and responsibilities can further enhance the success of the deployment.

Case Studies: Successful Private LLM Deployments

Healthcare Case Study

A notable healthcare case study involves a leading hospital network that implemented a private LLM to enhance patient triage processes. By training the model on historical patient data, the hospital improved the accuracy of patient assessments, reducing wait times and improving overall patient satisfaction. Key takeaways include the importance of integrating clinical expertise in model training and the value of continuous learning from real-time patient data.

FinTech Case Study

In the FinTech sector, a major financial institution leveraged a private LLM to automate customer service inquiries. By analyzing customer interactions, the model was able to provide accurate responses and improve response times significantly. Lessons learned include the necessity of maintaining compliance with financial regulations and the importance of user feedback in refining the model's performance.

Manufacturing Case Study

A manufacturing company adopted a private LLM for predictive maintenance, utilizing historical machine data to forecast potential failures. This proactive approach reduced downtime and maintenance costs. Key takeaways from this case study highlight the importance of data quality and the need for cross-departmental collaboration to ensure the model's success.

Performance Metrics for Evaluating LLMs

Key Performance Indicators (KPIs)

Key performance indicators (KPIs) for evaluating private LLMs include accuracy, response time, and user satisfaction. Organizations should establish clear benchmarks for these metrics to assess the model's effectiveness. Regular performance reviews can help identify areas for improvement and ensure that the LLM continues to meet organizational goals.
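The three KPIs named above can be computed from a log of reviewed interactions. The following sketch assumes each interaction has been labelled correct or incorrect by a reviewer, carries a response time in milliseconds, and has a user rating on a 1-5 scale. The `Interaction` record and the choice of the 95th percentile for response time are illustrative assumptions.

```python
import math
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Interaction:
    correct: bool       # reviewer judged the answer correct
    latency_ms: float   # end-to-end response time
    satisfaction: int   # user rating, assumed to be on a 1-5 scale


def summarize(interactions: Sequence[Interaction]) -> dict:
    """Compute accuracy, p95 latency, and mean satisfaction."""
    if not interactions:
        raise ValueError("at least one interaction is required")
    n = len(interactions)
    latencies = sorted(i.latency_ms for i in interactions)
    # Nearest-rank percentile: the smallest sample with at least
    # 95% of all samples at or below it.
    p95_index = math.ceil(0.95 * n) - 1
    return {
        "accuracy": sum(i.correct for i in interactions) / n,
        "p95_latency_ms": latencies[p95_index],
        "mean_satisfaction": sum(i.satisfaction for i in interactions) / n,
    }
```

Comparing the returned values against agreed benchmarks at each performance review makes regressions visible. A tail percentile is used for response time because an average can hide a small number of very slow responses.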

Comparative Analysis: Private vs Public LLMs

A comparison of private and public LLMs shows significant differences, particularly in data security and customization. Public LLMs may offer broader general-purpose capabilities, while private LLMs tailored to an organization's data can outperform them in specific applications. Weighing these trade-offs against the KPIs above helps organizations make informed decisions about their AI strategy.

Security Considerations for Private LLMs

Implementing Security Frameworks

Implementing robust security frameworks is critical for the successful deployment of private LLMs. Organizations should adopt industry-standard practices such as encryption, access controls, and regular security audits to protect sensitive data. Adopting a zero-trust security model can further enhance protection against potential threats.
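Access controls and audits can be illustrated with a deny-by-default permission check that records every decision. This is a minimal sketch in the spirit of a zero-trust model, not a complete security framework: the role names, actions, and `AuditLog` class are hypothetical, and a real deployment would typically delegate this to an identity provider and a tamper-resistant log store.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical mapping of roles to permitted actions.
PERMISSIONS = {
    "analyst": frozenset({"query_model"}),
    "admin": frozenset({"query_model", "update_model", "export_logs"}),
}


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user: str, role: str, action: str, allowed: bool) -> None:
        """Append one access decision with a UTC timestamp."""
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
        })


def authorize(user: str, role: str, action: str, log: AuditLog) -> bool:
    """Allow an action only if the role explicitly grants it; log the decision."""
    allowed = action in PERMISSIONS.get(role, frozenset())
    log.record(user, role, action, allowed)
    return allowed
```

Unknown roles and unlisted actions are both refused, and denied attempts are logged alongside granted ones so that later audits can review them.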

Data Privacy and Protection Measures

Data privacy and protection measures must be prioritized during the deployment of private LLMs. Organizations should ensure compliance with relevant data protection regulations and establish clear policies for data handling. Regular training for employees on data privacy best practices is essential to foster a culture of security within the organization.

Conclusion and Future Trends

Recap of Key Points

Deploying private LLMs in production requires careful consideration of infrastructure options, cost models, compliance requirements, and security measures. By following best practices and learning from real-world case studies, organizations can successfully implement private LLMs that drive efficiency and innovation.

The Future of Private LLMs in Various Industries

Looking ahead, the future of private LLMs appears promising as organizations continue to seek tailored AI solutions. Emerging trends such as increased focus on explainability, integration with other technologies, and advancements in AI ethics will shape the landscape of private LLM deployments across various industries.